Building a Lever on Time

World models will allow us to run thousands of realities in parallel

Welcome to this update from New World Same Humans, a newsletter on trends, technology, and society by David Mattin.


World models are having a moment. This week, a quick thought on where they’re taking us.

World models are AI systems that accurately simulate physical reality. How objects move and collide, for example, or how light behaves, or liquid flows. Google DeepMind recently released the astonishing new Genie 3 model; Demis Hassabis calls world models a crucial part of anything we can call true AGI. OpenAI is rumoured to be building a rival. Yann LeCun — Meta's AI chief for years — has left the company to launch his own world model startup.

I recently wrote about all this for Global Macro Investor. My research for that essay kept leading me back to a simple and strange idea. One I want to share here.

Time is, in some deep sense, the ultimate constraint. Everything we want to do — understand, build, create — must happen inside time. Want to run an experiment on a new material? That takes time. Want to train a robot to navigate new spaces? You let it fail over and again; that takes a lot of time. You’re trying to discover a new therapeutic molecule? You run experiments for months, or years.

Time is the container for all human effort. And it’s the medium we can’t escape. Everyone has the same 24 hours.

But now, that can change. World models can simulate physical reality with increasing accuracy. And when you can simulate reality, you can run thousands of realities at once. The result is massive acceleration. A century of robot training, compressed into a week. A decade of materials science, compressed into an afternoon. A thousand crash tests, done in an hour.
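To get a feel for the arithmetic behind those compressions, here is a minimal back-of-envelope sketch. It assumes each simulated world runs at some multiple of real time; the function name, the `speedup_per_world` parameter, and the four-hour "afternoon" are illustrative assumptions of mine, not figures from DeepMind or anyone else.

```python
HOURS_PER_YEAR = 365.25 * 24  # average year length, in hours


def parallel_worlds_needed(simulated_years, wall_clock_hours, speedup_per_world=1.0):
    """Return how many simulated worlds must run at once to accumulate
    `simulated_years` of experience within `wall_clock_hours` of real time.

    `speedup_per_world` is a hypothetical factor: 1.0 means each world
    runs at real-time speed, 10.0 means ten times faster than reality.
    """
    simulated_hours = simulated_years * HOURS_PER_YEAR
    return simulated_hours / (wall_clock_hours * speedup_per_world)


# A century of robot training in one week, each world at real-time speed.
century_in_a_week = parallel_worlds_needed(100, 7 * 24)

# A decade of materials science in an afternoon (assumed here to be 4 hours).
decade_in_an_afternoon = parallel_worlds_needed(10, 4)

# The same century in a week if each world runs 10x faster than reality.
century_with_fast_worlds = parallel_worlds_needed(100, 7 * 24, speedup_per_world=10.0)

print(round(century_in_a_week), round(decade_in_an_afternoon), round(century_with_fast_worlds))
```

On these assumptions, a century in a week means roughly 5,200 worlds running side by side at real-time speed, and far fewer if each world runs faster than reality. The lever is parallelism multiplied by speed, both of which are paid for in energy and compute.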

DeepMind's Genie 3 can generate playable, interactive 3D worlds from a single text description. Describe the video game you want, and it's ready to play. But these worlds can also be used as simulated realities, in which we can do all kinds of science; DeepMind is already using these new worlds as environments in which to train next-generation robots.

What’s happening here? At some deep level, it’s this: we are learning how to convert energy and compute into time. World models are machines for generating synthetic time. They do that by allowing us to create multiple realities that we can run in parallel. We can then mine those realities for knowledge, and import it back into this world.

In other words: world models are a lever on time.

We’ve always known that energy can be converted into other things. Heat, light, motion. Now add time to the list. This can mean explosive progress in everything from drug discovery to robotics to materials science. So many processes can be accelerated.

As so often when it comes to what is made possible by machine intelligence, the challenge will be our ability to process all this.

When we can play out 1,000 years of physical reality in a few minutes, what deep patterns become available to us? How do we find the time to explore them all? Will we even be able to understand them? Or will the machines simply do that part for us, too?

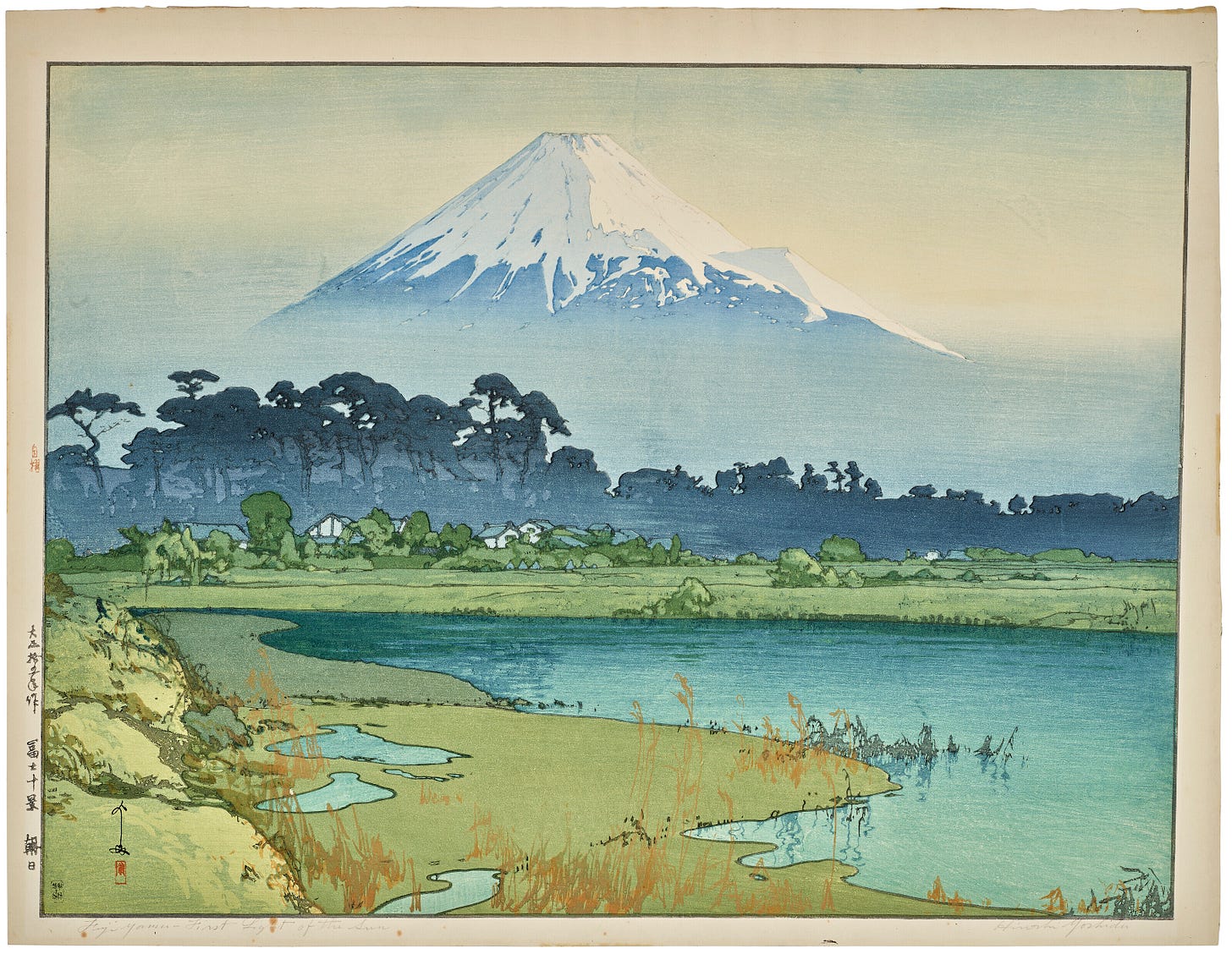

This was #23 in the series Postcards from the New World, from NWSH. The title artwork is a print by the 20th-century Japanese artist Hiroshi Yoshida.

Models are by definition a simplification of reality, but having said that, our understanding of reality is itself a model. Creating a multitude of reality models and running experiments that alter one variable in each makes a lot of sense to me and, as David alluded to, will accelerate science.

I'm still in the camp that says models are dead: they are based only on data from the past.

They are by definition not alive -- incapable of receiving new information without starting over again from scratch like an LLM going through full model training. They cannot capture a future influenced by adjacent possibles and the new system dynamics that emerge from evolving interactions in different contexts at different scales.